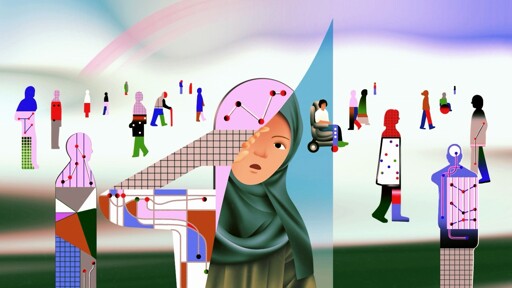

The Danish welfare authority, Udbetaling Danmark (UDK), risks discriminating against people with disabilities, low-income individuals, migrants, refugees, and marginalized racial groups through its use of artificial intelligence (AI) tools to flag individuals for social benefits fraud investigations, Amnesty International said today in a new report.

The report, Coded Injustice: Surveillance and Discrimination in Denmark’s Automated Welfare State, details how the sweeping use of fraud detection algorithms, paired with mass surveillance practices, has led people to unwillingly, or even unknowingly, forfeit their right to privacy, and created an atmosphere of fear.

“People in non-traditional living arrangements — such as those with disabilities who are married but who live apart due to their disabilities; older people in relationships who live apart; or those living in a multi-generational household, a common arrangement in migrant communities — are all at risk of being targeted by the Really Single algorithm for further investigation into social benefits fraud,” said Hellen Mukiri-Smith.

UDK and ATP also use inputs related to “foreign affiliation” in their algorithmic models. (…) The research finds that this approach discriminates against people based on factors such as national origin and migration status.

Amnesty International also urges the European Commission to clarify, in its AI Act guidance, which AI practices count as social scoring, addressing concerns [raised by civil society](https://www.hrw.org/news/2023/10/09/eu-artificial-intelligence-regulation-should-ban-social-scoring).