Microsoft’s LinkedIn will update its User Agreement next month with a warning that it may show users generative AI content that’s inaccurate or misleading.

[…]

The relevant passage, which takes effect on November 20, 2024, reads:

Generative AI Features: By using the Services, you may interact with features we offer that automate content generation for you. The content that is generated might be inaccurate, incomplete, delayed, misleading or not suitable for your purposes. Please review and edit such content before sharing with others. Like all content you share on our Services, you are responsible for ensuring it complies with our Professional Community Policies, including not sharing misleading information.

In short, LinkedIn will provide features that can produce automated content, but that content may be inaccurate. Users are expected to review and correct false information before sharing said content, because LinkedIn won’t be held responsible for any consequences.

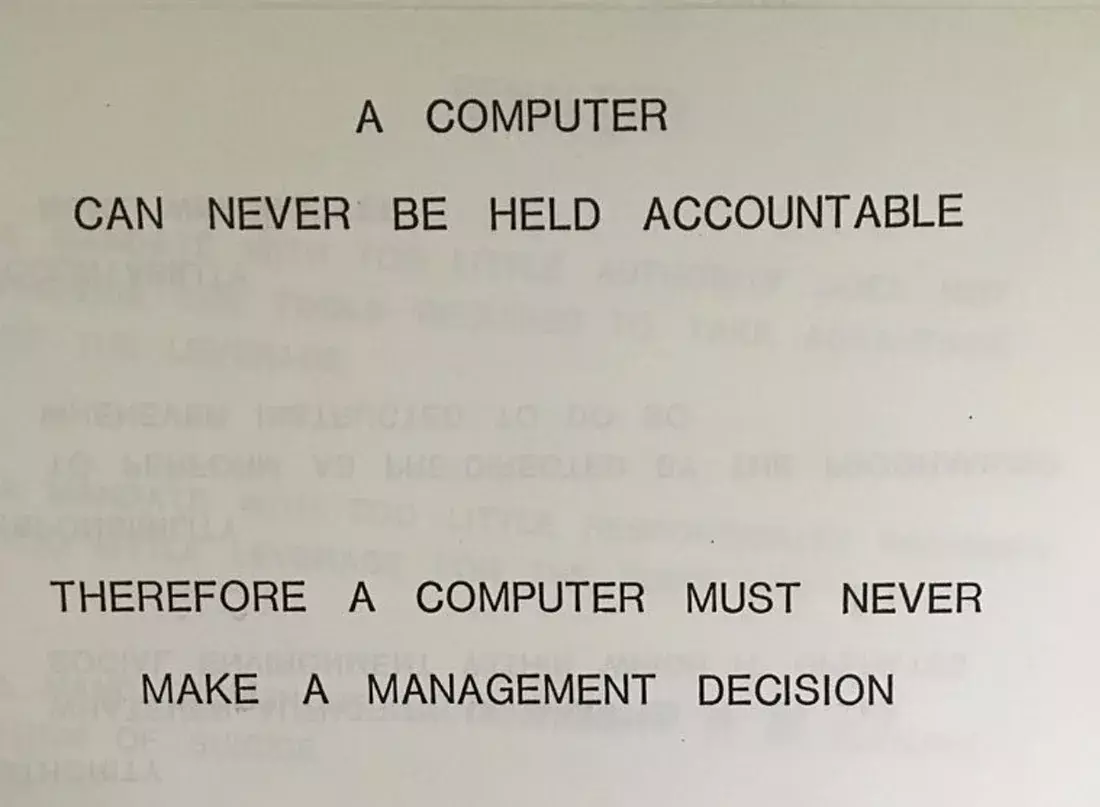

As IBM said in 1979, computers aren’t accountable, and I would go further and say they should never make any meaningful decision. The algorithm used doesn’t really make a difference. The sooner people understand that they are responsible for what they do with computers (like any other tool) the better.

The real question is, what if you commission a work from someone else, and they make it for you in a completely automated way? Let’s say a vending machine. Are you responsible for what the vending machine does if you use it as it’s supposed to be used? Or is it the owner of the machine?

Why is it different for LLM text generators?

If I commission a vending machine, get one that was made automatically and runs itself, and I set it up and let it operate in my store, then I am responsible if it eats someone’s money without giving them their item, gives them the wrong thing, or dispenses dangerous products.

This has already been decided, and it’s why you can open them up and fix them, and why each mechanism is controlled.

An LLM making business decisions has no such control or safety mechanisms.

“An LLM making business decisions has no such control or safety mechanisms.”

I wouldn’t say that - there’s nothing preventing them from building in (stronger) guardrails and retraining the model based on input.

If it turns out the model suggests someone killing themselves based on very specific input, do you not think they should be held accountable to retrain the model and prevent that from happening again?

From an accountability perspective, there’s no difference between a text-generating machine and a soda-generating machine.

The owner and builder should be held accountable, which puts a financial incentive on making these tools more reliable and safer. You wouldn’t let Tesla off the hook when its self-driving system kills someone because they didn’t test it enough or build in enough safeguards; that’d be insane.

Stronger guardrails can help, sure. But getting new input and building a new model is the equivalent of replacing the entire vending machine with a different model by the same company if one is failing (by the old analogy).

The problem is that if you do the same thing with an LLM for hiring or job systems, the failure is bias: the model itself is bigoted, which, while illegal, is hidden inside a model that is basically trained to be a more effective bigot.

You can’t hide your race from an LLM that was accidentally trained to know which job histories are traditionally Black, or anything else.

At this point, if you’re not double-checking something produced by your AI tool of choice, it absolutely is your fault. It’s no secret that these applications were trained on garbage.

“We will provide you with a tool to emit garbage and a platform to share content. If you put the two together, you are liable.”

Attractive nuisance much? Is it too much to ask that they should have to label it a garbage generator instead of “AI”? Why does honesty always have to take a back seat?

Because then tech would have to admit they’re moving into a period of stability rather than a period of constant growth.

The big companies and startups need to prove they’ve still got “revolutionary” potential, otherwise the stock values start to drop. And lower stock values mean smaller bonuses for leadership.

Morally speaking I’d blame both sides on this matter: Microsoft/LinkedIn for shoving generative A"I" where it doesn’t belong, and users who are presumptuous or gullible enough, and thus harmful enough, to take the output at face value.

The only thing I use AI for is generating character art for tabletop portraits and when the well is sufficiently poisoned I will probably go back to Pinterest.

On the one hand, putting absolute faith into an LLM and regurgitating anything it says as fact is just stupidity manifest. On the other, holding customers liable for their own shitty LLM is hilariously duplicitous.

Maybe, if this is a known issue, LI shouldn’t be pushing this crap on their platform in the first place, yeah? But some higher-up already fully bought into the grift, and to pull back now would be admitting they got duped, which will never happen of course.

LinkedIn shouldn’t even be an option. It was shit before Microsoft bought them (email man in the middle, remember that?) and somehow Microsoft made it worse.

I would never touch that platform. Friends don’t let friends use it.

If I didn’t need to have a profile there for work I wouldn’t. I had two jobs that were kind enough to tell me when I asked that they immediately passed on me because my resume had no LinkedIn or Facebook, and I deleted my Facebook a year ago.

That sucks. And the only way to fix it is to get people not to play.

I am on hiring committees for a large firm for IT positions. When people put their LinkedIn on their resumes, I see it as a negative. If they can’t value their personal data, I don’t see how they can value my company’s.

That just makes job hunting suck even more. Now you need to also check whether LinkedIn gets you hired or passed on.

Your merits matter most to me. But we all need to get the hell away from that platform.

You may want to opt out of those services. Even LinkedIn seems to know it’s got the potential to be a flaming hot train wreck, apparently to the point where they want no responsibility for the public messages made by a machine that they own, train, and QC.